Mark Maier (mmaier@chapman.edu) is the Founding Chair of the Leadership Programs at Chapman University in Orange, California, USA.

Author’s note: Revisiting NASA’s space shuttle disasters

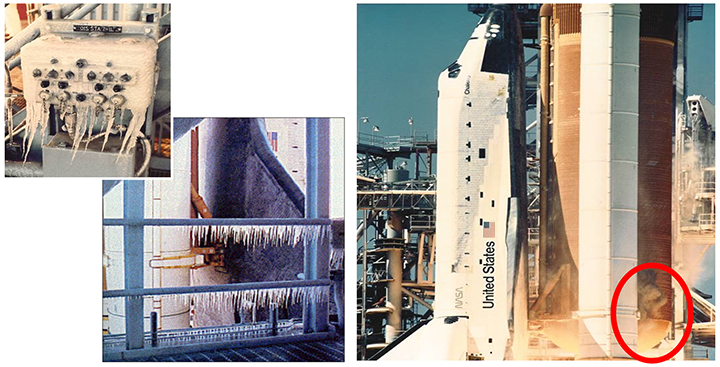

On January 28, 1986, the National Aeronautics and Space Administration’s (NASA) Space Shuttle Challenger exploded just 73 seconds into flight, killing all seven crew members, including America’s first civilian in space, an effervescent schoolteacher from New Hampshire named Christa McAuliffe. Repeated warnings by NASA and contractor engineers about the dangers associated with the fragile rubber rocket seals — known as O-ring seals — in the massive twin rocket boosters, particularly at colder temperatures, were downplayed and/or went unheeded, all under unrelenting pressure to meet an accelerating flight schedule. The temperature at liftoff was 15 degrees colder than any previous flight, and the fragile O-ring seals hardened in the cold air and vaporized. It was, as the 1986 Rogers Commission investigating the tragedy concluded, “an accident waiting to happen.”

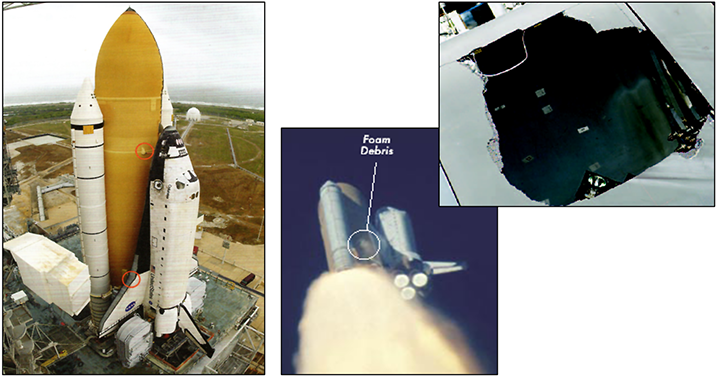

Seventeen years later, on the morning of February 1, 2003, Space Shuttle Columbia was on its return from space to the Kennedy Space Center in Florida when hot gases began to penetrate the thin aluminum shell of the spacecraft at the same spot where a large piece of dislodged foam had slammed into the underside of the shuttle’s left wing during its ascent 16 days earlier. At 200,000 feet above the earth, the shuttle disintegrated over Texas, killing all seven astronauts aboard. The calamity had been predicted with eerie precision by NASA engineers in the days immediately following the foam hit. Their requests to keep the astronauts in space long enough to launch a rescue mission and bring them safely home were overruled by shuttle program management, whose overriding concern was to maintain the ambitious flight rate and budget required to complete a key section of the International Space Station “on time.”

Though separated by 17 years, these tragedies were strikingly similar in their institutional origins, and both events could — and should — have been prevented.

“For a successful technology, reality must take precedence over public relations, for nature cannot be fooled.” —Dr. Richard Feynman, Nobel Prize-winning physicist and member of the 1986 Rogers Commission on the Space Shuttle Challenger Accident[1]

Doing the same thing over and over again and expecting a different outcome: a well-known definition of insanity.

“Those who cannot remember the past are condemned to repeat it.” —George Santayana

Drawing on dozens of seminars and workshops for managers that I led in partnership with two of the principals involved in the Challenger launch decision, Roger Boisjoly and Allan McDonald, I want to stress that you (like the participants in our sessions) are likely to be simultaneously a leader and a follower in your system, and that the leadership challenges you face in recognizing and resolving the inherent ethical tensions that frequently arise between “maintaining your business” and “doing the right thing” are relevant from both sides of this divide.[2]

Out of the approximately 2,500 professionals whom Boisjoly, McDonald, and I served in these workshops, rarely did any identify with “the power people” (i.e., the positional leaders) in the NASA scenarios we presented. Further, not one single participant in any of these workshops—from entry-, to middle-, to senior-, and even executive-level management—has ever agreed that they prefer misleading or deceptive input from their direct reports or favor the deliberate withholding of critical information caused by the subordinate’s fear that the information would be “unwelcome.” (Think for a moment: How would you respond if someone ever asked you, “Would you like my dishonest opinion?”)

Similarly, the participants have insisted that they would prefer to be told what they need to know instead of what subordinates think they would like to hear. They all agree that wishful thinking does not constitute a viable strategy for success. These hundreds of managers over a 30-year span agree that, ultimately, the only true bad news in a system is the information they don’t hear about, which they are thus unable to confront and correct. So, intuitively, all of these folks identify as leaders who have a duty to be the “hearer” of bad news. And yet…

When the workshop roles are switched and they feel called upon to be the bearers of an inconvenient truth—defined as a truth that is likely (or presumed) to pose an immediate threat to the attainment of an organization’s goals or expressed objectives—a dilemma emerges. When presented with the Challenger and Columbia case studies, nearly all of them are more likely to identify with the subordinates (i.e., the positional followers). Consequently, they struggle with the implications of facing the realities honestly and having the courage to speak truth to power. The typical quandary they bring up is how their system, organization, or superiors make it difficult to stand up and speak the truth as they see it in the face of anticipated resistance or outright rejection from their bosses and/or a major client/customer. Typical rationalizations they use to justify acquiescing to deception or outright lies are: “I had no choice,” “My hands were tied,” “The system made me do it,” “My job (or ‘our contract’) was at stake,” and, “Economics dictated our response.” Even if they are in reality in the highest echelons of their organizations, they instinctively relate to being in a subordinate position, not a supervisory position.

In other words, the ability to recognize and face reality as it is, not as we would like it to be, or to speak the truth can be directly compromised by organizational norms driven by an overriding concern with success, either personal (e.g., advancement) or organizational (i.e., meeting projected/announced goals, keeping costs down, and maximizing the bottom line), which reinforce a rigid respect for the hierarchy and pleasing one’s boss or customer, especially in the short term. Bearers of bad news do not want to risk being seen as not a team player, disloyal, or—at worst—insubordinate and therefore ignored or dismissed.

Another striking result from these seminars came when we introduced the subject matter and asked participants, “If you were a decision-maker in the Challenger chronology and could foresee that your decisions would lead to this ultimate outcome (disaster and death), how many of you would make a different choice?” They all raised their hands. They were also quick to criticize the question, saying that we can’t know such an outcome with absolute certainty. Touché!

This is a central point: We can no longer prevent the Challenger and Columbia disasters and the death of 14 crew members. We cannot turn back the clock; what’s done is done. But we can revisit the incidents to recognize the choices made by the real decision-makers that took them over the precipice and—in recognizing those steps and their all-too-familiar origins—commit ourselves to preventing such mistakes in order to avoid “launching a Challenger” in our own careers.

As you will see, Challenger and Columbia serve as virtual proxies or metaphors for organizational processes and managerial practices everywhere. Next to averting the actual tragedies, the best thing we can do to honor the memory of those who perished as a direct result of the deliberate, ill-advised, misinformed, and predictable decisions of our managerial kin is to commit ourselves to replacing the dysfunctional managerial script that condemned them to driving these horrific outcomes with a model that ensures adherence to a different mindset, different processes, and different outcomes. And at the risk of oversimplifying, I will chronicle a few of the more salient lies, fabrications, and deceptions embedded in the history of the shuttle program, focusing on the pivotal and commonplace managerial dynamics and decisions that doomed the Challenger and Columbia missions (see Figure 1*).

The aim of this article is simple: To (1) help you maintain the highest standards of integrity when confronted with ethical dilemmas at work; (2) equip you with the courage to address the truth (or reality); and (3) for those in leadership positions, encourage you to drive the creation of an environment where subordinates can, and will, tell their leaders the truth.

The insights provided here—the culmination of three decades of input from hundreds of managers like yourself—will hopefully challenge and inspire you to prevent the “launching of a Challenger” on your watch in your organization and in your lifetime.

A tale of two tragedies: The Challenger and Columbia disasters

“Did they learn NOTHING from us? Columbia evidenced the SAME pressures, the SAME communication breakdowns, the SAME management dynamics we faced on Challenger!” shared Brian Russell, an exasperated former Thiokol engineer.[3] Indeed, the disasters were separated by 17 years, but their underlying origins were so strikingly similar that the Columbia Accident Investigation Board (CAIB) devoted an entire chapter of their report, called “History as Cause: Columbia and Challenger,” to the subject, opening with what they deemed were “echoes of Challenger.”[4] The key shared origins identified in the CAIB report were: (1) schedule and cost pressure, (2) bureaucratic norms and hierarchy, and (3) communication failures exacerbated by organizational structure.

On the face of it, the two events were different in nature. Where the primary technical concern for Challenger in 1986 was a pre-launch assessment of whether the fragile rubber Solid Rocket Booster (SRB) O-rings would seal properly given the coldest temperatures ever anticipated for a shuttle liftoff (29°F), the question for Columbia in 2003 involved a post-launch assessment of whether a shedding piece of foam insulation from the external tank (ET) had caused crippling damage when it hit the left wing during the shuttle’s successful ascent into space. What makes these two issues parallel is that, significantly, there had been long-standing experience with both SRB O-ring erosion and foam shedding, though apparently neither condition was acceptable according to official shuttle design criteria and specifications. Further, both the Challenger and Columbia cases had additional significant similarities that ultimately led to the disasters, as Allan McDonald has pointed out:[5]

- Both were preventable
- Both resulted from well-known problems
- In both cases, no temporary fixes or constraints were imposed
- Both were launched in high wind shears aloft
- In both cases, engineers correctly identified the problems
- In both cases, engineers’ concerns were ignored, overruled, or dismissed
- NASA center management failed to identify or communicate the seriousness of the engineers’ concerns to the Mission Management Team
- In both cases, the NASA hierarchy discouraged dissent within NASA and the contractors
- In both cases, NASA management allowed safety concerns to take a back seat to costs and schedule

Schedule, cost, and publicity pressure

At the time of the Challenger 51-L Mission in 1986, NASA was attempting to accelerate the flight rate from nine missions in 1985 to 15 missions in 1986, and increasing it further to 24 missions (two per month) by 1990.[6] Successfully launching Challenger by January 28 would prove they could fly the requisite two shuttles per month—an overly ambitious and unrealistic target, as NASA and the nation eventually painfully discovered—but that was far, far below the original flight projections, which had promised “up to 60 missions a year” in order to secure national support and federal funding in the late 1970s.[7] Another pressure that further exacerbated the high-profile nature of the Challenger mission was the selection of a school teacher from New Hampshire, Christa McAuliffe, to serve as NASA’s first “civilian in space.” McAuliffe’s participation in the Challenger mission was a public relations boon for NASA, helping the organization to gain renewed support from a public grown jaded to routine shuttle flights in low-earth orbit.

Seventeen years later, former budget director Sean O’Keefe would be appointed to lead NASA, with a promise it could set a schedule it would meet and keep within its prescribed budget. The pressure was mounting, however, to complete the “Node 2” section of the International Space Station—later renamed “Harmony”—by February 4, 2004, even though it had become evident by January 2003 to all but the highest levels of management that the goal was unattainable. Regular launch delays had already stretched the system to its breaking point: a third shift had been added so flight preparations could continue around the clock, vacation time was cancelled, and in an effort to minimize flight turnaround and shuttle refurbishment time, all shuttle flights were directed to land at the Kennedy Space Center (KSC) in Florida.

Lastly, neither O-rings (Challenger) nor foam shedding (Columbia) had yet caused a shuttle to fail, so agency and contractor managers flatly assumed they were not flight safety issues. NASA and Morton Thiokol (NASA contractor and the maker of the O-rings) were concerned about the persistent erosion issues on the O-ring seals, which were a scant ¼″ thick and eroded when exposed even for an instant to the hot (i.e., 5,600°F) gas. They knew that if hot gas impingement continued for longer than 300 milliseconds (faster than you can blink your eyes), the O-rings would have a “high probability” of failure. “We were lucky, just dumb-ass lucky, that we had not had a disaster like this before,” NASA Launch Official Larry Mulloy confided to me in a personal interview in 1991.[8]

On the surface, it may appear perfectly logical to fly and expand your tolerances based on your experience base. However, the willingness of NASA and contractor management to gradually increase the limits of tolerance had the de facto effect of creating a situation in which they gradually increased the risk. That is, each time you perform a risky action and get away with it, you assume your choice to do so was correct, so you are lulled into a false sense of security and complacency. This is what Dr. Richard Feynman, member of the 1986 Presidential Commission on the Space Shuttle Challenger Accident, referred to as “playing Russian roulette”[9] with the shuttle: instead of abiding by the initial criteria for component performance, the only way to effectively prove that the components are failing is to fly past their breaking points and blow a shuttle up.

In both disasters, the mistaken calculation was that the problem was not acute, that it would be resolved in time, and that flights could continue while the search for the cause (and the fix) was underway. In both post-disaster analyses, the unrelenting pressure to meet an accelerating flight schedule was singled out as a critical culprit that influenced the management decisions, which deliberately and inexorably, if unintentionally, led to the death of seven astronauts in each scenario.

The triumph of bureaucracy and hierarchy over expert judgment: ‘The system made me do it’

The CAIB report pointedly highlighted the disastrous implications of another common feature of the classic managerial/organizational mindset: “NASA’s culture of bureaucratic accountability emphasized chain of command, procedure, following the rules, and going by the book.…had an unintended but negative effect. Allegiance to hierarchy and procedure had replaced deference to NASA [and contractor] engineers’ technical expertise.”[10]

To help you keep track of the players in this managerial drama, refer to Appendix 1 for a summary of NASA’s matrix organizational structure.[11]

‘Believing in the system more than in the people’

In response to Thiokol Space Shuttle Solid Rocket Motor (SRM) Project Director Allan McDonald’s request “for an engineering assessment, a technical recommendation” on whether the O-rings could hold at the record extreme low temperatures predicted for Challenger’s launch the following morning, an evening telephone conference meeting was held on January 27, 1986 (see Appendix 2[12] and the audio clips featuring McDonald explaining (1) how the telecon originated in the first place[13] and (2) the basis for his insistence on “a technical recommendation.”)[14] The previous coldest launch (53°F) had taken place just one year before, in January 1985, and had experienced the worst instance of O-ring erosion in the fragile Rocket Booster seals. The predicted temperature range for the Challenger launch on January 28 was 29°F–38°F, far outside the prior envelope of experience (see Figure 2). “No,” Thiokol officially declared at the meeting, “We recommend against launching the Challenger tomorrow.” It was the first time in the entire history of space flight that a contractor was recommending against a launch, and Thiokol fully expected NASA to abide by its conservative pro-safety recommendation. But that did not happen.

NASA’s Mulloy, Thiokol’s direct launch interface with the agency and SRB program manager operating out of the Marshall Space Flight Center and at the Kennedy Space Center for the launch itself, forcefully rejected Thiokol’s recommendation. Mulloy argued that no specific launch commit criteria (i.e., the criteria that must be met in order for the launch of a space shuttle to happen) existed on shuttle joint temperature, and he was concerned about the precedent that a cancellation would set for future missions: “My God, Thiokol! When do you want us to launch? Next April?! The eve of a flight is a hell of a time to be generating new Launch Commit Criteria!” Ironically, his emotional outburst followed on the heels of his chastising Thiokol for relying on “qualitative data” and “handwringing emotion” for its no-launch position. During his Presidential Commission testimony on the Challenger explosion, Mulloy offered that they had “successfully flown with the existing Launch Commit Criteria 24 previous times.”

This logic seems reasonable on its face, but it ignores the fact that Mulloy had played a direct part in preventing the launch commit criteria from being modified not once but twice in the 11 months prior to Challenger. The first instance occurred following the January 1985 (53°F) launch, when Thiokol’s post-flight inspection uncovered an alarming extent of O-ring distress on two field joints. Boisjoly, the top expert on the O-ring seals who had conducted the inspection, explained to me, “I nearly had cardiac arrest and fell off the 16-foot ladder during the post-flight inspection of the recovered booster at Kennedy. It was much, much more severe than anything we had seen before.” Boisjoly prepared the flight review briefing chart for Thiokol, presented by McDonald, which also emphasized an unprecedented new danger zone in discerning that the backup O-ring in the booster had been affected by hot gas: “First time heat effect on secondary O’ring has been observed.”

Thiokol, a Level IV contractor and NASA’s sole-source supplier for shuttle Rocket Boosters, concluded in its flight review briefing to NASA’s Marshall Space Flight Center (Level III) on February 8 that “low temperature enhanced probability: [the January ’85 flight] experienced the worst case temperature change in Florida history.” When Mulloy passed the assessment up to Level II four days later, after Marshall had reviewed it, the references to the unprecedented heat effect on the secondary O-ring and any reference to cold temperatures had been deliberately excised. (The key post-flight briefing charts evaluating these erosion effects on Mission 51-C are provided in Appendix 3a.) The second instance of Marshall withholding or manipulating flight evidence was just five months prior to the Challenger failure, in August 1985, when Mulloy insisted that Thiokol remove an explicit reference that “lower temperatures aggravate the problem” in a briefing to NASA headquarters specifically on “Erosion of SRM Pressure Seals.”[15]

If, therefore, there truly were no specific launch commit criteria on joint temperature (a claim some experts, including McDonald, question), then it was thanks to Mulloy’s own intervention to ensure that it was so. McDonald would tell me in 1991 that Mulloy was “believing in the system more than in the people inputting into that system.”[16]

‘Take off your engineering hat’

On the eve of the Challenger launch, after more than an hour of deliberating late into the night, it became clear to the Thiokol managers that they were not telling NASA what the agency wanted to hear, so they called for a “time-out” in the telecon.

Jerry Mason, the general manager at Thiokol’s Utah plant, began the caucus by addressing only his three vice presidents huddled around him, ignoring the 10 engineers seated around the same conference table, not to mention his SRM director’s (McDonald) specific instructions to provide a technical recommendation grounded in the facts. Mason softly announced, “We have to make a management decision.”

But the facts did not support a launch, so they struggled to reframe their “no-launch data” to find a rationale to fly. O-ring expert Boisjoly, who had led the early part of the telecon briefing, was apoplectic: He grabbed his color photographs showing the stark difference between the effects of hot gas on room- and cold-temperature joints and slammed them down on the table in front of the managers, “literally screaming” (Boisjoly’s words) at them to look at the photos and not ignore what they were illustrating.

Halfway through this caucus, Mason pointedly asked, “Am I the only one who wants to fly?” and, when it was evident that Vice President of Engineering Bob Lund was still standing firm on the technical/engineering ground McDonald had argued for, ordered him point-blank to “take off your engineering hat and put on your management hat.”

Shortly thereafter, Lund relented, and Mason called for a vote. “And a poll was then to be taken,” recalled Russell, then a young Thiokol engineer and assistant lead to the O-ring task force, in testimony. “And I remember at the time wondering, if asked, and I remember that distinctly, what I would do, and whether I would be alone and whether I would have the courage, if asked, to stand up and say, ‘No.’ However, we were not asked, as the engineering people. It was a management decision at the vice presidents’ level.” Indeed, Mason only polled the four senior managers, deliberately excluding the 10 engineers, including Boisjoly, who were seated around the same table (see Appendix 4).[17]

When questioned why he only polled the managers, Mason, who had earlier characterized the telecon as “a free and open discussion, with all of the people there…,” offered, incredibly, “We only polled the management people, because we had already established that we were not going to be unanimous,” which translates to: “We knew our engineering experts would disagree with us, so there was no need to ask them, and as managers, we were entitled to ignore them.” Even Boisjoly, in his Presidential Commission testimony, would defer to this hierarchy: “I never take away any management right to take the input of an engineer and then make a decision based on that input, and I truly believe that.”

Meanwhile, McDonald, Thiokol’s representative for the launch in Florida, had already let his NASA superiors involved in the launch decision chain know that he would refuse to sign off on the launch decision if the company indeed came back with a reversal in its position. He mentioned that there were multiple reasons to delay the flight—beyond the likely O-ring failure at frigid temperatures. McDonald was told, “Those are not your concerns,” but that they would be passed along in an advisory capacity to the NASA higher-ups. He also offered a prescient and chilling conclusion while awaiting the results of his company’s caucus, telling the NASA launch officials Mulloy and Stanley Reinartz: “If we launch tomorrow and something goes wrong on this flight, I sure wouldn’t want to be the person to stand up before a board of inquiry and explain why we launched this thing outside of what the motor is qualified to.” Refusing to sign his company’s launch authorization, which became the ultimate defense for Mulloy and NASA, was “the smartest thing I had ever done, though I was certain I had not endeared myself to my bosses back in Utah by that refusal, and had potentially risked my career at Thiokol.”[18] McDonald remains to this day the only person in American history restored to his job by a threatened Act of Congress (see Appendix 4, as well as an audio description of McDonald’s futile argument with the launch officials to not allow the launch to proceed.)[19]

Recall that the shuttle program received its funding authorization based upon a promise of flying “up to 60 missions a year,” when the true flight rate could realistically not exceed five to six per year. NASA accepted Thiokol’s bid largely based on its “sizable cost advantage,” despite NASA engineers’ concerns about its SRB O-ring joint design. Similarly, when faced with the prospect of fixing the flawed joint once its malfunctions came to light, no one wanted to stand up and take responsibility for halting the shuttle program, least of all Morton Thiokol, maker of the Rocket Booster. “We were in a true dilemma,” Russell explained. “If we had asked for the millions of dollars it would have taken to correct the design and fix the joint, NASA would have rightfully pushed back, asking us, ‘If it’s this bad, then why are we still flying??!’” (emphasis original).[20]

As a member of the 1986 Presidential Commission on the Space Shuttle Challenger Accident, Feynman eloquently remarked in his Addendum to the Presidential Report that the point of the Commission ultimately was:

… to ensure that NASA officials deal in a world of reality in understanding technological weaknesses and imperfections well enough to be actively trying to eliminate them. They must live in reality in comparing the costs and utility of the Shuttle to other methods of entering space. And they must be realistic in making contracts, in estimating costs, and the difficulty of the projects. Only realistic flight schedules should be proposed, schedules that have a reasonable chance of being met. If in this way the government would not support them, then so be it. NASA owes it to the citizens from whom it asks support to be frank, honest, and informative, so that these citizens can make the wisest decisions for the use of their limited resources. For a successful technology, reality must take precedence over public relations, for nature cannot be fooled.[21]

The same managerial norms that confer authority and power on positional leaders (e.g., the managerial prerogative) become the legitimizing norm to exculpate subordinates from exercising leadership in their own right, providing cover for not being responsible for “speaking out unless spoken to.” Beware invoking this principle. Use it sparingly and only if you are secure that your motivation for doing so is honorable and true, not just because you dislike the input or its implications.

Also, when you signal your preference for the outcome as the positional leader in the room (e.g., “Am I the only one who wants to fly?”), you are in effect shutting down the critical thinking capability and input from your subordinates, suppressing any potential disagreement and dissent from them—essentially testing their loyalty to the organization—and encouraging them to “go along to get along.”

The Space Shuttle Columbia: The Foam Impact and Debris Assessment Team

When NASA engineers examined flight film of the January 16, 2003, ascent of Space Shuttle Columbia, they were shocked to discover that a huge piece of foam had broken off from the bipod structure of the external tank, slamming into the front edge of the left wing (see Figure 3). A Debris Assessment Team (DAT) was created by the Shuttle Program Manager Ron Dittemore and the Chair of the Mission Management Team, Shuttle Program Integration Manager Linda Ham. The DAT was charged with determining the severity of the foam hit and any likely consequences. In the course of their deliberations, Rodney Rocha, a chief engineer for NASA and a DAT cochair, quickly realized that an accurate assessment would be impossible without obtaining in-flight imaging from a U.S. spy satellite. This would involve getting the cooperation of the U.S. Strategic Command as well as altering some of the planned mission activities, but it was necessary and readily doable, so Rocha sent the request.

On flight day seven (January 22), Kennedy Space Center Flight Director N. Wayne Hale Jr., having been informed of the DAT’s need, reached out informally to a Department of Defense (DOD) colleague he knew to initiate the desired imaging request. As the CAIB reported, “The Debris Assessment Team was asked to prove that the foam hit was a threat to flight safety, a determination that only the imagery they were requesting could help them make.”[22] When Ham found out about the request, however, she was furious, and set out to determine if there was any mandatory “requirement” for imaging[23] in this (unprecedented) circumstance. Vexed that U.S. Strategic Command (part of the DOD) could be involved without her knowledge or approval, she determined that there was no explicit requirement for imaging, relying on an assumption that foam loss had “never been a ‘Safety of Flight’ issue.”[24] As the CAIB concluded, “Shuttle managers exhibited a belief that RCC [Reinforced Carbon Carbon] panels,” where the foam impact occurred, were “impervious to foam impacts.”[25] Ham then informed the DAT that they would have to make their assessment and report their findings without the desired images. On January 23, she canceled the request, having a liaison apologize to the DOD and emphasize instead the need for a clearer procedure for the vetting of imaging requests:

The request that you received was based on a piece of debris…that came off shortly after launch and hit the underside of the vehicle. Even though this is not a common occurrence it is something that has happened before and is not considered to be a major problem. The one problem that this has identified is the need for some additional coordination within NASA to assure that when a request is made it is done through the official channels.…One of the primary purposes for this chain is to make sure that requests like this one does not slip through the system and spin the community up about potential problems that have not been fully vetted through the proper channels. (emphasis added)[26]

After reemphasizing that “future requests of this sort are confirmed through the proper channels,” Ham’s liaison asked that “no resources are spent unless the request has been confirmed” and closed by clarifying that the purpose of reaching out was to “eliminate the confusion that can be caused by a lack of proper coordination.”

Informed of the cancellation of the request, Rocha, concerned that the decision was wrong and “bordering on irresponsible,”[27] sent several emails regarding the ongoing analyses and details of the cancellation of the imaging request. At the same time, NASA Flight Director Steven Stich informed Columbia Commander Rick Husband and Pilot William “Willie” McCool about the debris strike to prepare them for an upcoming event with the nation’s press:

Rick and Willie,

You guys are doing a fantastic job staying on the timeline and accomplishing great science. Keep up the good work …

There is one item that I would like to make you aware of for the upcoming [Public Affairs Office] event on [flight day] 10 and for future events later in the mission. This item is not even worth mentioning other than wanting to make sure you are not surprised by it in a question from a reporter. [emphasis added]

Experts have reviewed the high speed photography and there is no concern for [reinforced carbon–carbon] or tile damage. We have seen this same phenomenon on several other flights, and there is absolutely no concern for reentry…

Stich’s understanding that the foam hit was insignificant came from a preliminary computer modeling analysis of the debris impact he received from an engineer whose area of expertise, it turned out, was not the part of the wing where the foam hit but rather the thermal tiles on the shuttle’s underbelly, which were mistakenly presumed to have been the area impacted by the foam. This engineer relied erroneously on a model that was designed to simulate and predict the impact of micrometeorites (1" x 4" maximum size) in space, not a briefcase-sized chunk of foam travelling at a relative speed of more than 500 miles per hour. The analysis wasn’t questioned because it coincided with the intentions and assumptions of the shuttle program managers that foam never had been, and was not now, a flight safety issue.

Two days before Columbia was due to return, on January 30, NASA engineer Robert Daugherty emailed a mechanical systems colleague about main gear breach concerns, indicating,

Before I begin I would offer that I am admittedly erring way on the side of absolute worst-case scenarios and I don’t really believe things are as bad as I’m getting ready to make them out. But I certainly believe that to not be ready for a gut-wrenching decision after seeing instrumentation in the wheel well not be there after entry is irresponsible.

Daugherty went on to describe in frighteningly accurate detail the precise cascading step-by-step failure that the Columbia crew would indeed face just two days later:

…(5) Do you belly land? … (6) If a belly landing is unacceptable, ditching/bailout might be next on the list. Not a good day.…Admittedly, this is over the top in many ways, but this is a pretty bad time to get surprised and have to make decisions in the last 20 minutes.

The worst fears of Daugherty, Rocha, Hale, and others were confirmed when, in the early morning hours of February 1, 2003, and barely 15 minutes from their scheduled landing at Kennedy Space Center, astronauts Husband, McCool, and their five crewmates perished on their way home as the 3,000°F heat of reentry penetrated a basketball-size hole in the left wing, causing the orbiter to disintegrate 200,000 feet above the earth (see Figure 3).

It was a hole that would have been totally obvious if the imaging request had been honored and allowed to proceed. Simply put, their deaths could have been prevented.

Cultural effects on communication

The CAIB investigation also found striking parallels in the communication failures between Challenger and Columbia, the causes of which bear a remarkable resemblance to commonplace organizational and managerial dynamics that you may be familiar with. The CAIB noted that the initial shuttle design “predicted neither foam debris problems nor poor sealing action of the Solid Rocket Booster joints. To experience either on a mission was a violation of design specifications.”[28] But instead of these anomalies being treated as signs of potential danger when they first came to the attention of the shuttle program managers, they were deemed to be “acceptable flight risks”; their deviance was not only tolerated but expected and even normalized.

In both scenarios, as we have seen, strict adherence to NASA’s hierarchy blocked effective communication. Signals were overlooked, people were silenced, and useful information and dissenting views on technical issues did not surface at higher levels; when they did, they were promptly dismissed. What was successfully communicated to parts of the organization was that O-ring erosion and foam debris were not problems,[29] owing to the intense schedule and cost pressures discussed above. Organizational superiors in both instances discouraged or prevented open, honest communication and squelched dissent. The party-line vision created a performance-oriented can-do culture that led to flawed decision-making and diminished curiosity and critical thinking. Their resistance to doubt and their inability or, more appropriately, unwillingness to imagine not only the likelihood of being wrong but also the severity of the consequences made the ultimate outcomes and the untimely deaths of these 14 astronauts seemingly inevitable.

The CAIB report emphasized the lack of foresight on the part of organizational leadership as well as their responsibility for creating the culture that prized curtailing costs and achieving unrealistic results. “Leaders create culture. It is their responsibility to change it.”[30]

Contrasts in a leader’s conscience

Having implicated the all-too-familiar presence of traditional organizational dynamics and managerial practices as root causes of NASA’s shuttle disasters in the above descriptions, let us pause for a moment to examine the striking contrast between two of the key senior managers involved in the two decision processes: Dr. William R. Lucas, head of NASA’s Marshall Space Flight Center at the time of Challenger, and N. Wayne Hale Jr., the shuttle program flight integration manager (and KSC flight director) at the time of Columbia.

Lucas met with his subordinates, Reinartz and Mulloy, in his hotel room on Merritt Island, Florida, immediately before they departed for the January 27 telecon with Thiokol. They informed their organizational superior, who was not formally in the launch decision chain, that Thiokol had already let them know that it would be opposing the launch based on the record cold temperatures predicted at the launch site. In a 1991 interview, Mulloy recalled that Lucas commented, “That sure is interesting: We get a little cold nip, and they want to shut the shuttle system down? I sure would like to see their reasons for that!”[31] This set up a dilemma for the men from Marshall: By accepting a delay on Challenger, when NASA had already announced accelerating the flight rate to 15 missions in 1986 and up to 24 by 1990, they would slow the program down and, more immediately, incur their boss’s wrath.

According to a senior manager at Marshall known only as “Apocalypse,” Lucas had consistently made it known that “under no circumstances is the Marshall Center to be the cause for delaying a launch” (see Appendix 5).[32] As Apocalypse explained:

I am a senior manager at the Marshall Space Flight Center [MSFC] and have served as a member of the MSFC Flight Readiness Review Board for all twenty-five shuttle launches. I have watched in despair as William R. Lucas, MSFC Center Director, has lied time and time again in national news conferences. I have watched in disbelief as he has engineered a coverup of the MSFC involvement. When I add to this the deep concerns that I and many other senior managers secretly shared preceding the launch relating to the management philosophy associated with determining flight readiness, my conscience compels me to speak out. [emphasis added]

The Marshall Space Flight Center is run by one man, William R. Lucas. His style of management can best be described as feudalistic. In his ten year tenure as Center Director, he has established a personal empire built on ‘the good old boy’ principle. The only criteria for career advancement is total loyalty to this man. Loyalty to country, NASA, the Space Program, mean nothing. Many a highly skilled manager, scientist, or engineer has been ‘buried’ in the organization because they underestimated the man’s psychopathic reaction to dissent. [emphasis added]

Clearly in dismay over his (and his colleagues’) failure to stand up to Lucas and thus prevent the death of the Challenger astronauts, Apocalypse confessed, “In my own case, I have played the game. I have learned not to debate with him, not to argue, but to compliment and flatter him. We have learned that we must tell him he is right even though he is wrong in order to survive.” He continued:

We are flying shuttles based on the flawed management philosophy that if no one can prove the hardware will fail, then we launch.

And, we vote. It is understood that Lucas expects his board to vote for launch. In the twenty-five center level flight readiness reviews that I have participated [in], never has there been a single negative vote from a Marshall Board Member or a Marshall Contractor that we are not ready. This amounts to hundreds of Yes’s and not a single No. This speaks for itself. This process is an exercise in self-disillusionment and illustrates the tremendous gap between MSFC and NASA Management decision making and what happens in the real world. The Contractors understand that Lucas can get them fired, hence they tell NASA what they know NASA and MSFC wants to hear. ‘We are ready.’… Lucas proceeded at risk rather than have the MSFC delay the program, a classic management error in shortsightedness.

God Help Us All.

Apocalypse.

If the above assessment is valid, then Lucas’s “cold nip” comment to Mulloy and Reinartz would have been construed as a directive to approve the launch, despite any objections from Thiokol. Even years later, with the benefit of hindsight to prove the error in their judgment, Mulloy would insist, right up to the very end of his life in 2020, that they made the “logical decision” based on what they knew at the time—a logical decision with a tragic result—and that he was the willing “lightning rod” or “spear-catcher” and scapegoat for his higher-ups’ choices.[33]

Contrast Lucas’s creation of a toxic climate at Marshall (and Apocalypse’s acquiescence to it: “We must tell him he is right even though he is wrong in order to survive”) with the 2004 messaging of Hale on the eve of the one-year anniversary of the Columbia disaster. (Recall that it was Hale’s outreach to the DOD after the foam debris strike on Columbia that had initiated the imaging request procedure that Ham had subsequently, and abruptly, ended.) In a system-wide communication with the space shuttle team at the Johnson Space Center, under the subject, “Adjusting Our Thinking” (see Appendix 6),[34] Hale wrote:

I have been doing a lot of thinking lately: the approaching anniversary of the Columbia accident, reading the new book on the accident, the incessant questions from the press, …and most importantly thinking about the new policy and direction from our leaders. Like many of you I have had some mixed emotions from all of this. I would like to share some of my thoughts with you.

Hale went on to present a lofty and inspiring vision of space exploration that he “signed onto…as a schoolboy,” and compared it to the Olympic Torch Relay:

Each person and each program holds the flame of exploration and progress high for an allotted portion of the route, and then the torch is passed to the next runner in the relay….The goal of exploring and settling the solar system will not be completed in our lifetime or our children’s lifetime. But we—here and now—are called to run our lap with skill, dedication, vigilance, hard work, and pride.

Further in his letter, Hale introduced the idea that the foundation for all the work ahead for NASA “is to fly the shuttle safely.” This was written while the fleet was still grounded due to the Columbia accident, and the next flight would not occur for another year and a half (July 2005). By September 2005, Hale would be promoted to head of the entire shuttle program.

In his 2004 missive, Hale wrote, “We have been given a great mandate. Those of us who are in the shuttle program now will be required to help the next generation succeed….We must make sure that the next launch—and landing—and those that follow are safe and successful. That will be our finest contribution to the future, carrying the torch ahead.” He closed:

A worker at KSC told me that they haven’t heard any NASA managers admit to being at fault for the loss of Columbia. I cannot speak for others but let me set my record straight: I am at fault. If you need a scapegoat, start with me. I had the opportunity and the information and I failed to make use of it. I don’t know what an inquest or court of law would say, but I stand condemned in the court of my own conscience to be guilty of not preventing the Columbia disaster. We could discuss the particulars: inattention, incompetence, distraction, lack of conviction, lack of understanding, a lack of backbone, laziness. The bottom line is that I failed to understand what I was being told; I failed to stand up and be counted. Therefore look no further; I am guilty of allowing Columbia to crash.

One of the more powerful insights that our workshop participants have appreciated is a favorite quote McDonald would share from the late journalist Sydney J. Harris: “Regret for things we did is tempered by time,” and then he would invariably pause before adding, “but regret for things we did not do is inconsolable.” Consider how this form of regret weighed on Hale, as he exhorted his colleagues to do their best for the future of the Space Program:

As you consider continuing in this program, or any other high risk program, weigh the cost. You, too, could be convicted in the court of your conscience if you are ever party to cutting corners, believing something life and death is not your responsibility, or simply not paying attention. The penalty is heavy, you can never completely repay it.

Do good work. Pay attention. Question everything. Be thorough. Don’t end up with regrets.