During the early days of the COVID-19 pandemic, my oldest daughter had been learning to drive, which had allowed me the honor of riding in the car alongside her. On the one hand, I was thrilled at the opportunity to experience this milestone with her; on the other, I was overwhelmed thinking about every facet of driving a vehicle that she’d surely have to master and that I’d been charged with somehow teaching her.

At the time, we lived in Denver, Colorado. So, we drove a few miles to an empty parking lot of the stadium where the Colorado Rapids, the Major League Soccer team, play their matches—a location, I might add, where we’d feel confident that very few people would be driving. I pulled into a parking spot, and we switched seats. I coached her on the art of going straight, changing lanes while using the turn signal, and stopping at a stop sign. I said to her, “Slowly ease out onto the stretch of the road in front of you and drive straight, keeping your eyes on the road and looking at what’s right in front of you. Change lanes at least once using the turn signal, and drive until I tell you to stop.”

She seemed to understand the terms I used as I gave my instructions, trusting what I knew about driving a vehicle. She complied with my instructions exactly. Yet, after a few minutes of her driving, I said, “Stop the car, please. I’m getting dizzy. You aren’t driving straight on your side of the road. And when you changed lanes, I didn’t see you check your blind spot. What exactly are you looking at while you’re driving?”

Somewhat exasperated, she said, “I’m trying to look at the road right in front of me.” Then she looked at me. I’ll never forget the puzzled look on her face, and I’ll never forget the question she asked: “What’s a blind spot, and how do I check it?”

That question taught me a profound lesson: If we’re only looking at the road right in front of us, we won’t ever see where we’re going. If we don’t focus on seeing where we’re going, we aren’t going to reach our destination. That all seems obvious, sure. But if we don’t learn about blind spots and how to check them, we won’t anticipate what might catch us by surprise! That knowledge and skill could very well help us avert misfortune.

So, I emphasized, “It’s less about looking at the road right in front of you and more about seeing down the road, anticipating what’s in front of you. More importantly, it’s about spotting the unexpected situations and adapting to them. Blind spots are those completely unexpected situations, the moments of unknowns, that can be neither easily foreseen nor entirely prevented. But learning to check your blind spots often will position you for greater success while driving.”

What I figured had been a relatively decent driving lesson had turned out to be an even greater lesson in rethinking complex and unexpected situations, which require strategies to confront them proficiently. Since then, I’ve contemplated important ideas that had been brought to life during the driving lesson that day, ideas that can be applied not only effectively but also meaningfully to compliance practices.

Blind spots in compliance

Despite substantial preventative measures, compliance offices and research programs confront emerging complex and unpredictable situations. Traditional compliance approaches (instituting policy and procedure reform, addressing the weightiest of compliance risks, and imposing stronger compliance mandates and oversight) cannot alone prevent noncompliance and other types of wrongdoing in fluctuating environments. Too often, the most challenging of compliance entanglements are unforeseen. For this reason, compliance professionals must seek ways to innovate how they approach and practice compliance. When we search for ways to enhance our practices in our diverse areas of compliance, we discover how to see those areas in ways we likely haven’t previously. We uncover aspects of compliance that either give new meaning and perspective to our professional worlds or reveal something unexpected and imperceptible: something we hadn’t even realized we should’ve been focusing on all along, or why. The reason we hadn’t realized it is that we have blind spots.[1]

Even so, we must learn to outmaneuver unanticipated and complex situations. We must learn to spot the blind spots: the gaps, weaknesses, and overconfidence in our daily compliance practices that could lead to misfortune. Fortunately, there are some simple strategies that can be used regularly to check and reduce blind spots and, ultimately, improve compliance oversight.

Examine implicit assumptions

At times, as compliance professionals, we tend to jump prematurely to conclusions about whether the events of a situation have led to noncompliance. Perhaps we either haven’t objectively considered the facts of the events, or we’ve centered our thinking on a personal choice or viewpoint about what noncompliance looks like. In essence, implicit assumptions don’t generally help us to expand our awareness, become attuned to our blind spots, and avert potential disaster. But we can examine and test our implicit assumptions to be better equipped when approaching compliance issues, especially when the issues not only cover more than one area of compliance but also introduce unforeseen complexity.

Failure to carefully examine implicit assumptions is a sure way to be blindsided in complex compliance situations. In many cases, these assumptions can be incredibly difficult to identify because they derive from understandings or expectations that aren’t fully articulated within a particular context. Often, they underlie assessments, courses of action, decisions, or judgments that aren’t explicitly voiced. In areas of research compliance, for example, one implicit assumption might be that principal investigators don’t want to be bothered with compliance because they only care about research data, career advancement, or professional recognition. Another might be that research teams don’t dedicate enough time to training and education in research best practices or compliance. And yet, being guided by these assumptions frames compliance practices and influences how noncompliance issues are identified and subsequently addressed.

Now, implicit assumptions aren’t inherently evil. In fact, implicit assumptions stem from education and experience, providing a framework that can be helpful when assumptions are verified. Although they aren’t evil, implicit assumptions remain limited and, therefore, incomplete. That’s precisely why we must examine them carefully to avoid drawing incorrect conclusions—making flawed assessments and determinations, that is—about compliance or noncompliance.

Three types of bias underlie implicit assumptions. First, confirmation bias manifests itself in the automatic mental shortcuts people use (often without awareness, intention, or control) to make decisions quickly or judge a situation. It’s rooted in people’s background, beliefs, education, and experience, and it’s expressed rationally and reinforced when new information is observed that matches up nicely with the preconception. Next, overconfidence bias results from an unjustifiable self-assurance or optimism bias. It distorts how people react to the magnitude and complexities of a situation. Lastly, anchoring bias hinders the ability to objectively evaluate and incorporate new and subsequent information. In other words, people rely exclusively on one piece of evidence or data to inform their perspective, render judgment, and make decisions. Because these three biases could sway our actions, we would do well to examine them intentionally, so we can more readily spot the blind spots in our areas of research compliance.

In practice, for instance, consider a compliance situation where the stakes are high and emotions tense. Documentation errors in research records have resulted in potentially serious noncompliance. Now imagine assessing the error-ridden research records because of the reported noncompliance. It’s noted that two research professionals have overseen the research study in question: one who’s been recently onboarded to the research team and is still learning the processes and systems at the research institution, and one who’s a seasoned research professional with extensive experience in the field and has been assigned to mentor and train newcomers to research. But only one of the research professionals has committed repeated record-keeping errors resulting in noncompliant records. Now, which research professional do you suspect is the one keeping noncompliant records?

It’s natural to immediately conclude that the newly onboarded research professional was the one who erroneously documented research activities and committed noncompliance. Education, experience, and training suggest that the seasoned research professional would surely have more compliant documentation and a higher quality work product. But that conclusion is implicit bias at work, and it undermines any effort to work through the complexities of the compliance situation.

While I examined connections between the effects of bias and spotting blind spots in compliance, I met with a colleague and consultant in research compliance. He illustrated the overconfidence bias masterfully while discussing these connections. He referred to a quip from the consulting world, which holds that someone easily becomes an expert after completing a project. “That’s absolute nonsense. If you’ve seen one research program,” he emphasized, “then you’ve seen one research program.” From our discussion, it became even more apparent that we must test our implicit assumptions, regardless of what we’ve experienced as professionals. And sometimes, it means seeking insight from unconventional sources, like your oldest daughter while trying to teach her to drive a car.

Test implicit assumptions

There are significant reasons why it’s essential to test implicit assumptions. On the one hand, the importance rests on the questions presupposed in the assumptions: questions that could help uncover potential blind spots and could point professionals in the direction of where they might need to seek insight and perspective. On the other hand, it’s vital to overcome cemented thinking by testing the assumptions. That requires professionals to know what they’re assuming, why they’re assuming it, where they should seek insight, and how they can evolve their thinking.

Testing begins with having plausible theories. Then from those theories, compliance professionals should formulate simple questions that challenge them to look for the contradictions to discover alternative possibilities. That way, they can figure out where they must focus their compliance attention. Lately, it seems that answers to complex, challenging situations have tended to reveal themselves when simple questions have been asked and tested. The challenge comes when cemented thinking (overconfidence and anchoring bias) tries to override the testing process.

Therefore, to check the blind spots of implicit assumptions, form questions that prevent immediate action based on theories: What other information or details do I need before acting? What have I observed that has led to the assertions I’ve made? How could I bring perspective and objectivity to my theory of the situation? Questions like these can help compliance professionals contemplate their thinking when the stakes are high and emotions strained concerning noncompliance.

But success in overcoming cemented thinking depends entirely on identifying unconventional sources of insight and perspective. At times, the expertise—the curse of knowledge—we’ve acquired in a particular specialty can be the enemy of creative insights and creative thinking. One of my favorite ways of thinking about finding creative insight comes from Steve Jobs. He’s quoted as saying, “Creativity is just connecting things. When you ask creative people how they did something, they feel a little guilty because they didn’t really do it, they just saw something. It seemed obvious to them after a while. That’s because they were able to connect experiences they’ve had and synthesize new things. And the reason they were able to do that was that they've had more experiences or they have thought more about their experiences than other people.”[2]

Seeking unconventional insight is similar to finding creative insight. It’s about connecting experiences we’ve had and synthesizing them into new ways of looking at things—old and new problems. That’s why it’s invaluable to seek out and have more experiences with unconventional sources of information and insight: so that those experiences push us to see things in a fundamentally different way. I also love how Steve Shapiro describes a way in which we can see things in a fundamentally different way when we seek insight from unconventional sources. In his TEDxNASA talk, Shapiro offers an alternative approach to testing our implicit assumptions: “If you’re working on an aerospace challenge, and you have 100 aerospace engineers working on it, the 101st aerospace engineer is not going to make that much of a difference. But you add a biologist, or a nanotechnologist, or a musician, and maybe now you have something fundamentally different.”[3] With Jobs’ and Shapiro’s insights to guide our thinking, the pivotal question to ask, then, should be: Who else might have solved a similar problem I’m facing? Because when we engage with unconventional sources of insight, we become that much more attuned to checking our blind spots.

As professionals in compliance, when we know something—say the best practice, process, policy, or regulation—that’s when we hold tightly to implicit assumptions about “how we’ve always done things around here,” “the best practice has always been this way,” or “the way things have always worked.” However, we should also beware of the flaw: When we know something, it’s painfully difficult to imagine a time when we didn’t know it. In fact, we’re often flabbergasted when others don’t seem to know it or why—let alone understand it. It’s perfectly fine that we create and have templates, best practices, and operational models in research. But the drawback that should be acknowledged is that sometimes as professionals, we dismiss critical, independent thinking when we rely too heavily on the best practices, templates, and models because we don’t believe they should ever evolve. Then they become cemented and sacred—dare I say it, worshiped.

Consequently, we don’t stay curious about the effectiveness of practices, templates, and models, and we stop experimenting with them. Harold Kerzner, author of Project Management Metrics, KPIs, and Dashboards: A Guide to Measuring and Monitoring Project Performance, brilliantly puts into words the idea of evolving practices: “[A]fter acceptance and proven use of an idea, the better expression possibly should be ‘proven practice’ rather than a best practice. This leaves the door open for further improvements.”[4] Another strategy could prove valuable to spot the blind spots in established best practices.

Use prospective hindsight

Too often, complex compliance situations end badly. One contributing factor is that many compliance professionals tend to jump to the wrong conclusions prematurely and then make noncompliance declarations. There’s a way to counteract jumping to the wrong conclusions too quickly: use prospective hindsight. Research has shown that imagining events as if they’d already occurred, especially the events’ hypothetical opposites and consequences, is one of the most valuable strategies to improve outcomes. In their 1989 research findings, Deborah J. Mitchell of the Wharton School, Jay Russo of Cornell, and Nancy Pennington of the University of Colorado discovered that prospective hindsight (imagining that an event has already occurred) increases the ability to correctly identify reasons for future outcomes by 30%.[5]

Using prospective hindsight means thinking through hypothetical opposites. As a method described in Mitchell and her colleagues’ research, it requires performing a premortem assessment. Think postmortem and autopsies, but the opposite. There isn’t any waiting for noncompliance to occur. Although an after-the-fact assessment of noncompliance would unveil the actual noncompliance so it could be prevented in the future, a premortem of noncompliance would anticipate it before it had even occurred, before the research study had ever begun. In other words, outcomes resulting in research noncompliance don’t end up on the postmortem examination table.

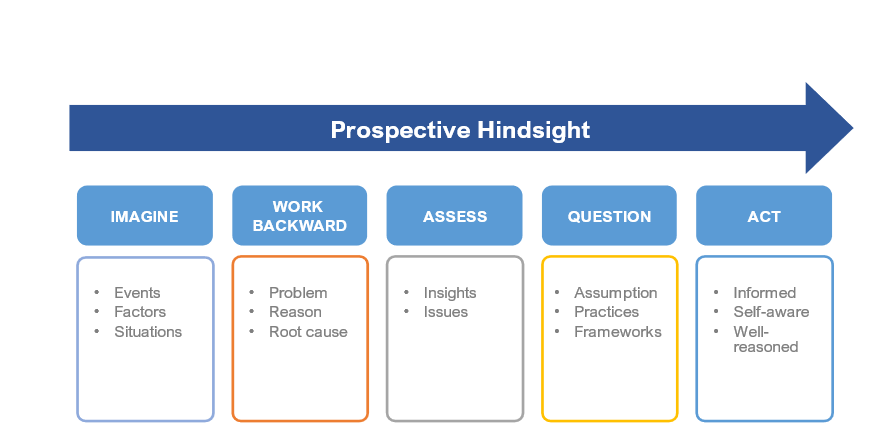

The process of thinking through hypothetical opposites works like this: Imagine that some fashion of noncompliance has resulted—a disaster, even failure. Imagine the adverse, unfavorable events, factors, or situations that will surely result in that noncompliance. Now, work backward to understand the reasons—the root causes of the noncompliance. Unlike planning compliance audits, where professionals might choose areas to audit based on noncompliance trends, the process of hypothetical opposites operates on the assumption that noncompliance has indeed occurred and led to failure. The process compels us to ask genuinely what did go wrong. Then the task is to generate plausible reasons for the failures that led to noncompliance. Working with hypothetical opposites means, in other words, imagining that noncompliance has resulted and retracing the actions and events to uncover the roots of that noncompliance, assessing the issues, and gaining insight (see Figure 1).

Thinking in hypothetical opposites has several strategic advantages in compliance. For one thing, it tempers overconfidence, which in turn grounds compliance professionals in making more realistic assessments of noncompliance. It also helps to think about and prepare for contingencies—to see connections in creative ways to spot issues that might have otherwise been overlooked. Furthermore, the process can highlight factors that could influence success or failure or determine potential noncompliance, which may enhance the ability to implement countermeasures. Lastly, it underscores just how critical it is to be informed and self-aware of the potential pitfalls to avoid as a way of spotting blind spots of noncompliance and planning for the unknown. Not only does this strategy help evolve thinking beyond the stages of cementing best practices, but it also can be instrumental in testing implicit assumptions.

The effectiveness of this strategy hinges on resisting the temptation to rely on implicit assumptions and testing them instead. If compliance professionals must assume something has failed because of noncompliance, then they will have to challenge their thinking by pinpointing what might account for that. This is yet another opportunity to detect some potential unknowns and identify the blind spots in compliance. Challenging our thinking naturally prompts an evaluation of a compliance program’s effectiveness. From there, we can act according to the facts and influential factors that might result in success rather than failure; we can more effectively spot the research compliance blind spots. And that’s how best practice thinking evolves.

Conclusion

In the compliance profession, we must challenge our assumptions and mental frameworks about complex compliance situations. We must learn to spot the potential blind spots hiding between an assumed reality and an actual reality. It’s important to reiterate that we should rely on our compliance framework. But we should also challenge our best practice thinking by seeking unconventional perspectives to gain insight into what actions should be taken so that we can evolve our thinking and practices. We do this when we experiment with ideas and possibilities. It’s how we can better spot blind spots.

If, as professionals, we could magically choose when we’d confront complex compliance situations, we’d maximize the opportunity with an innovative approach and minimize our stress. We’d opt for when we could control the circumstances influencing the situation to ensure a successful outcome. That magical moment would mean we wouldn’t be susceptible to blind spots. However, the complexity of the compliance landscape today doesn’t offer too many magical moments where blind spots are entirely avoidable. Realistically, perfectly controlling a compliance situation without blind spots is impossible because having perfect insight to maintain compliance in a situation only occurs in hindsight. It’s challenging to manage the unpredictable unknowns; we’re likely to be blindsided unless we learn to spot the blind spots in research compliance.

Takeaways

- Learn strategies to identify blind spots in areas of research compliance.
- Examine and test implicit assumptions to avoid prematurely making noncompliance declarations.
- Use prospective hindsight to be more self-aware of potential compliance pitfalls.
- Reconsider compliance plans and areas of risk by incorporating hypothetical opposites.
- Seek unconventional insight for creative alternatives when facing complex compliance situations.